A related concept is AI explainability, which focuses on understanding how an AI model arrives at a given result. The Learning Management System (LMS), a training platform with several advantages, is gaining in popularity with many companies. Finally, blockchain is gaining ground, notably to secure certifications and reinforce the credibility of training programs. In summary, Frappe LMS emerges as a robust, modern solution for organizations looking to renew their educational methods, offering flexibility, customization and an optimized user experience.

What Are The Challenges Of Large Language Models?

This article tells you everything you need to know about large language models, including what they are, how they work, and examples of LLMs in the real world. Because of the challenges faced in training LLMs, transfer learning is heavily promoted as a way to mitigate the challenges discussed above. For the same reason, prompt engineering has become a new and popular topic in academia among people who expect to use ChatGPT-type models extensively.

In the attention mechanism, each input is assigned a weight that signifies its importance in the context of the rest of the input. In other words, models no longer need to dedicate the same attention to all inputs and can focus on the parts of the input that actually matter. This representation of which parts of the input the neural network needs to attend to is learned over time as the model sifts through and analyzes mountains of data. Driven by deep learning algorithms, these AI models have taken the world by storm for their remarkable ability to generate human-like text and perform a wide range of language-related tasks. The general architecture of an LLM consists of many layers, such as embedding layers, attention layers and feed-forward layers.
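The weighting idea above can be sketched in a few lines. This is a minimal, hypothetical illustration of scaled dot-product attention for a single query, using hand-picked toy vectors; real transformer layers first project the inputs through learned query, key and value matrices and run many attention heads in parallel, which this sketch omits.

```python
import math

def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention_weights(query, keys):
    """Scaled dot-product scores between one query and every key, normalized by softmax."""
    d = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    return softmax(scores)

def attend(query, keys, values):
    """Return the attention-weighted sum of the value vectors, plus the weights."""
    weights = attention_weights(query, keys)
    dim = len(values[0])
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return output, weights

# Toy 2-d vectors (hypothetical): tokens 0 and 2 "match" the query, token 1 does not.
query = [1.0, 0.0]
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
values = [[10.0, 0.0], [0.0, 10.0], [10.0, 0.0]]

output, weights = attend(query, keys, values)
print([round(w, 3) for w in weights])  # [0.401, 0.198, 0.401]
```

Tokens whose keys align with the query receive larger weights, so the output is dominated by their values; that is the sense in which the model concentrates on the parts of the input that matter.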

AI21 Wordspice suggests changes to original sentences to improve style and voice. The earliest networked learning system was the Plato Learning Management system (PLM), developed in the 1970s by Control Data Corporation. An encoder converts input text into an intermediate representation, and a decoder converts that intermediate representation into useful text.

- To improve accuracy, this process involves training the LLM on massive corpora of text (billions of pages), allowing it to learn grammar, semantics and conceptual relationships through self-supervised learning, which also gives it some zero-shot ability on tasks it was not explicitly trained for.

- LLMs will also continue to expand in terms of the business applications they can handle.

- They can quickly scan large volumes of legal documents, extract relevant clauses, summarize legal precedents, and identify inconsistencies, saving time and reducing the workload for lawyers.

- Today, we're taking a deep dive into what LLMs are, how they work, how you can implement them with automation, and examples of how organizations can benefit from them.

- The canonical measure of an LLM's performance is its perplexity on a given text corpus; lower perplexity means the model is less "surprised" by the text.

- These manual processes, often error-prone and time-consuming, demanded hours of effort per document.
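Perplexity, mentioned in the list above, is the exponential of the average negative log-probability a model assigns to each actual next token: PPL = exp(-(1/N) Σ log p(x_i | previous tokens)). The sketch below is a hypothetical toy in which a bigram counter stands in for an LLM, with add-one smoothing assumed so unseen word pairs never get zero probability. Its training signal is self-supervised in the same sense as for LLMs: each token's label is simply the token that follows it.

```python
import math
from collections import Counter, defaultdict

def perplexity(token_probs):
    """exp of the average negative log-probability assigned to each actual next token."""
    if not token_probs:
        raise ValueError("need at least one token probability")
    avg_nll = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_nll)

def train_bigram(tokens):
    """Self-supervised 'training': the label for each token is just the token after it."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts

def next_token_probs(counts, tokens, vocab_size):
    """Add-one smoothed P(next | prev) for every position in `tokens`."""
    probs = []
    for prev, nxt in zip(tokens, tokens[1:]):
        row = counts.get(prev, Counter())  # unseen context -> empty row
        probs.append((row[nxt] + 1) / (sum(row.values()) + vocab_size))
    return probs

train = "the cat sat on the mat the cat ate".split()
counts = train_bigram(train)
vocab_size = len(set(train))  # 6 distinct words

familiar = "the cat sat".split()
unusual = "the ate on".split()
ppl_familiar = perplexity(next_token_probs(counts, familiar, vocab_size))
ppl_unusual = perplexity(next_token_probs(counts, unusual, vocab_size))
print(round(ppl_familiar, 2), round(ppl_unusual, 2))  # 3.46 7.35
```

Text that resembles the training data gets the lower perplexity; a model that spread probability uniformly over V tokens would score exactly V.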

This has led to a number of lawsuits, as well as questions about the implications of using AI to create art and other creative works. Models may perpetuate stereotypes and biases that are present in the data they are trained on. This discrimination can take the form of biased language or the exclusion of content about people whose identities fall outside social norms. To address the current limitations of LLMs, Elastic offers the Elasticsearch Relevance Engine (ESRE), a relevance engine built for AI-powered search applications. With ESRE, developers can build their own semantic search applications, use their own transformer models, and combine NLP and generative AI to improve their customers' search experience. Transformer models rely on self-attention mechanisms, which let them train more quickly than traditional models such as long short-term memory (LSTM) networks because they process a sequence in parallel rather than token by token.
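Semantic search of the kind described for ESRE generally ranks documents by how close their embedding vectors are to the query's embedding. The sketch below shows only that ranking step, using cosine similarity; it does not use the ESRE or Elasticsearch APIs, and the three-dimensional vectors and document IDs are invented for illustration, where a real system would produce the embeddings with a transformer model.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def rank_documents(query_vec, doc_vecs):
    """Return (doc_id, score) pairs sorted from most to least similar to the query."""
    scored = [(doc_id, cosine_similarity(query_vec, vec)) for doc_id, vec in doc_vecs.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)

# Hypothetical embeddings; a real system would get these from a transformer model.
docs = {
    "refund-policy": [0.9, 0.1, 0.0],
    "shipping-times": [0.1, 0.9, 0.1],
    "return-an-item": [0.8, 0.2, 0.1],
}
query = [0.85, 0.15, 0.05]  # stands in for e.g. "how do I get my money back?"

ranking = rank_documents(query, docs)
for doc_id, score in ranking:
    print(f"{doc_id}: {score:.3f}")
```

Because the comparison is between meaning-bearing vectors rather than keywords, a query that never mentions "refund" can still rank the refund policy first.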

Parameters is a machine learning term for the variables a model learns during training and then uses to infer new content. LLMs are a class of foundation models, which are trained on enormous amounts of data to provide the foundational capabilities needed to drive multiple use cases and applications, as well as to solve a multitude of tasks.
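To make "parameters" concrete: every weight and bias in a layer is one parameter, so the count follows directly from the layer shapes. The sizes below (a 50,000-token vocabulary, 768-dimensional embeddings, a 3,072-wide feed-forward layer) are illustrative assumptions, not the configuration of any particular model.

```python
def dense_params(d_in, d_out, bias=True):
    """A fully connected layer has a d_in x d_out weight matrix plus one bias per output."""
    return d_in * d_out + (d_out if bias else 0)

def embedding_params(vocab_size, d_model):
    """An embedding table stores one d_model-sized vector per vocabulary entry."""
    return vocab_size * d_model

def feed_forward_params(d_model, d_hidden):
    """Transformer-style feed-forward block: expand to d_hidden, then project back to d_model."""
    return dense_params(d_model, d_hidden) + dense_params(d_hidden, d_model)

vocab_size, d_model, d_hidden = 50_000, 768, 3_072  # assumed, illustrative sizes
print(f"{embedding_params(vocab_size, d_model):,}")   # 38,400,000
print(f"{feed_forward_params(d_model, d_hidden):,}")  # 4,722,432
```

Stacking dozens of such blocks, together with attention layers, is how parameter counts climb into the billions.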

Real-World Business Uses Of LLMs

A large language model (LLM) is a deep learning algorithm that can perform a variety of natural language processing (NLP) tasks. LLMs use deep learning techniques to handle sequential data such as text. They consist of multiple layers of neural networks whose parameters are adjusted during training and can later be fine-tuned. These layers also include attention mechanisms, which focus on specific parts of the input.

Traditional benchmarks such as HellaSwag and MMLU, for instance, have already seen models achieve high accuracy. Making a context window larger has drawbacks, including higher computational cost and a possible dilution of focus on local context, while making it smaller can cause a model to miss an important long-range dependency. Striking the balance is a matter of experimentation and domain-specific considerations. In 2025, AI is expected to transform LMSs by optimizing learning paths, offering personalized recommendations and automating assessments. The platform offers advanced features for customizing training paths, assigning specific roles and monitoring learners' progress in detail. In terms of pedagogical features, Canvas provides robust tools for creating quizzes, monitoring student performance and managing learning paths.
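The context-window trade-off described above can be illustrated with two small functions. This is a hypothetical sketch: it assumes the simplest strategy of keeping only the most recent tokens (real systems may summarize, retrieve, or truncate differently), and it counts the n × n score matrix that full self-attention computes per head and per layer.

```python
def fit_to_context(tokens, window):
    """Keep only the most recent `window` tokens (simple left truncation, assumed here)."""
    if window <= 0:
        return []
    return tokens[-window:]

def attention_score_count(n_tokens):
    """Full self-attention scores every token against every token: n * n per head, per layer."""
    return n_tokens * n_tokens

# Hypothetical sequence: the name that "she" refers to appears 11 tokens earlier.
tokens = ["Alice"] + ["filler"] * 10 + ["she"]

print("Alice" in fit_to_context(tokens, 8))    # False: the long-range dependency is lost
print("Alice" in fit_to_context(tokens, 12))   # True
print(attention_score_count(8192) // attention_score_count(4096))  # 4
```

Doubling the window quadruples the attention work, while shrinking it can cut off the earlier token a later one depends on.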

For example, by leveraging LLMs, businesses can automate customer inquiries and support tickets and provide personalized answers, all while freeing up valuable human resources to focus on more important issues. According to Voiceflow, 58% of consumers expect companies to offer personalized experiences based on their past interactions, highlighting the growing demand for AI-driven customer service solutions. Alternatively, computers can help humans do what they do best: be creative, communicate, and create. A writer suffering from writer's block can use a large language model to help spark their creativity.

Create And Test Quickly With DataStax Langflow

Open edX, jointly developed by MIT and Harvard, is an open-source LMS platform designed to deliver a flexible, customizable learning experience. Its modular structure enables organizations to create tailored learning environments adapted to their specific needs. In fact, in purely human terms, what LLMs are doing isn't exactly "learning" at all. But by training your LLM carefully, you can get it to do something closer to learning than any machine has been capable of before. Fast forward to today: AI-powered document agents can now process complex financial documents in just six minutes, 95% faster than before.

These tokens are then transformed into embeddings, which are numeric representations of their context. LLMs are able to do this thanks to billions of parameters that allow them to capture intricate patterns in language and perform a wide range of language-related tasks. LLMs are transforming applications in various fields, from chatbots and virtual assistants to content generation, research assistance and language translation. It was previously standard to report results on a held-out portion of an evaluation dataset after doing supervised fine-tuning on the rest.
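The token-to-embedding step can be sketched as a vocabulary lookup followed by a row lookup in an embedding table. This is a simplified, hypothetical example: it splits on whitespace, whereas real LLMs use subword tokenizers, and its table is filled with seeded random numbers, whereas a trained model learns those values as part of its parameters.

```python
import random

def build_vocab(texts):
    """Assign an integer ID to each distinct lowercase word, in order of first appearance."""
    vocab = {}
    for text in texts:
        for word in text.lower().split():
            vocab.setdefault(word, len(vocab))
    return vocab

def tokenize(text, vocab, unk_id):
    """Map each word to its ID; words outside the vocabulary get the shared unk_id."""
    return [vocab.get(word, unk_id) for word in text.lower().split()]

def make_embedding_table(n_rows, dim, seed=0):
    """Random stand-in for a learned embedding matrix: one dim-sized vector per ID."""
    rng = random.Random(seed)
    return [[rng.uniform(-0.1, 0.1) for _ in range(dim)] for _ in range(n_rows)]

def embed(token_ids, table):
    """Look up the embedding vector for each token ID."""
    return [table[i] for i in token_ids]

vocab = build_vocab(["large language models learn patterns"])
unk_id = len(vocab)  # reserve the last row for unknown words
table = make_embedding_table(len(vocab) + 1, dim=4)

ids = tokenize("Language models learn grammar", vocab, unk_id)
vectors = embed(ids, table)
print(ids)                            # [1, 2, 3, 5]
print(len(vectors), len(vectors[0]))  # 4 4
```

The resulting list of vectors, one per token, is what the attention and feed-forward layers actually operate on.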

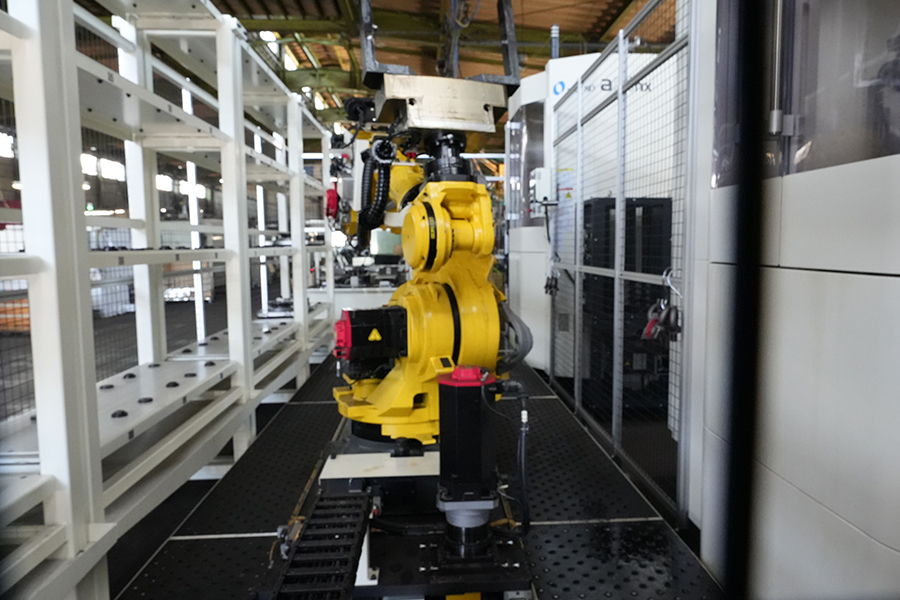
